Which I can also control in the population.

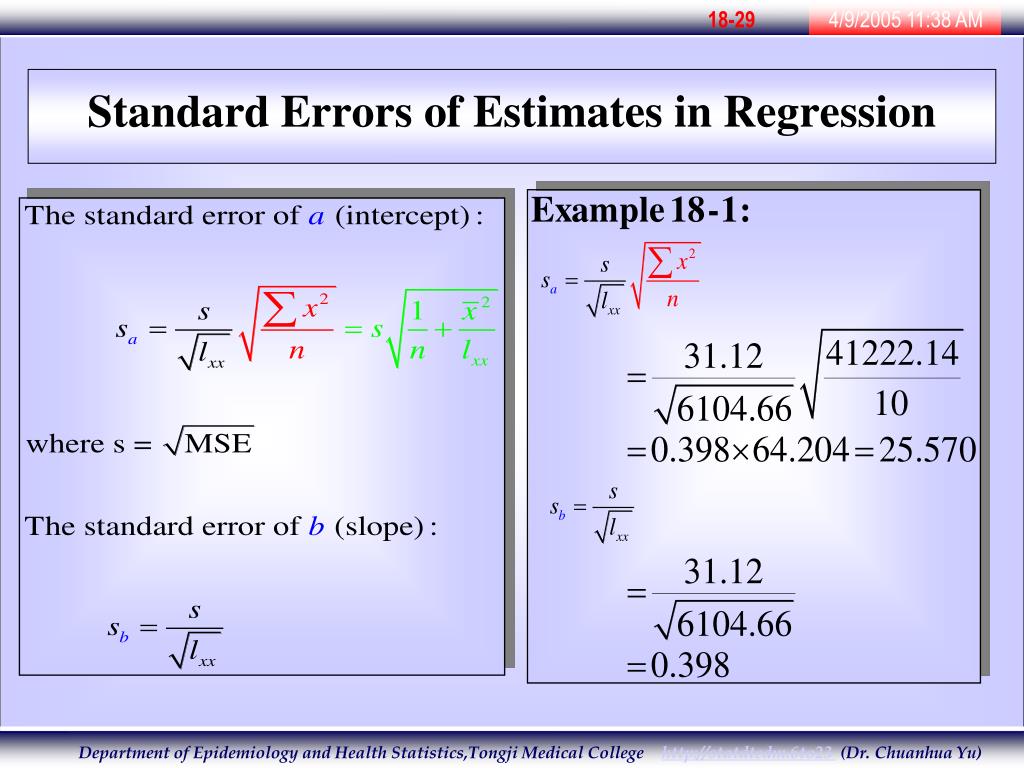

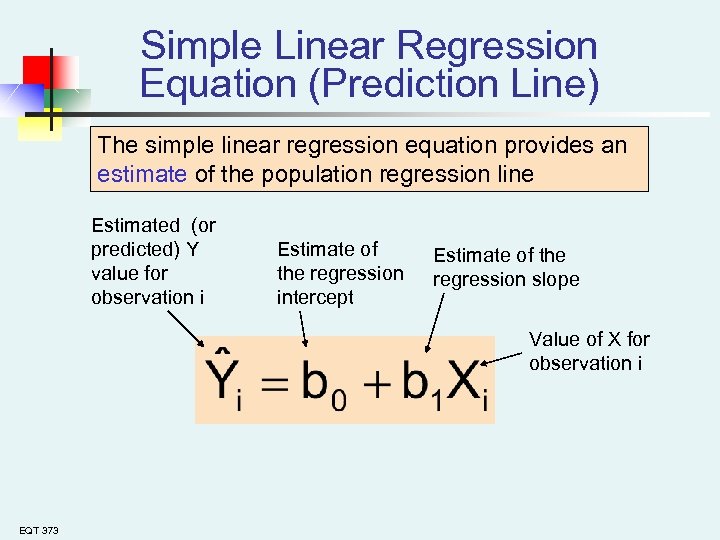

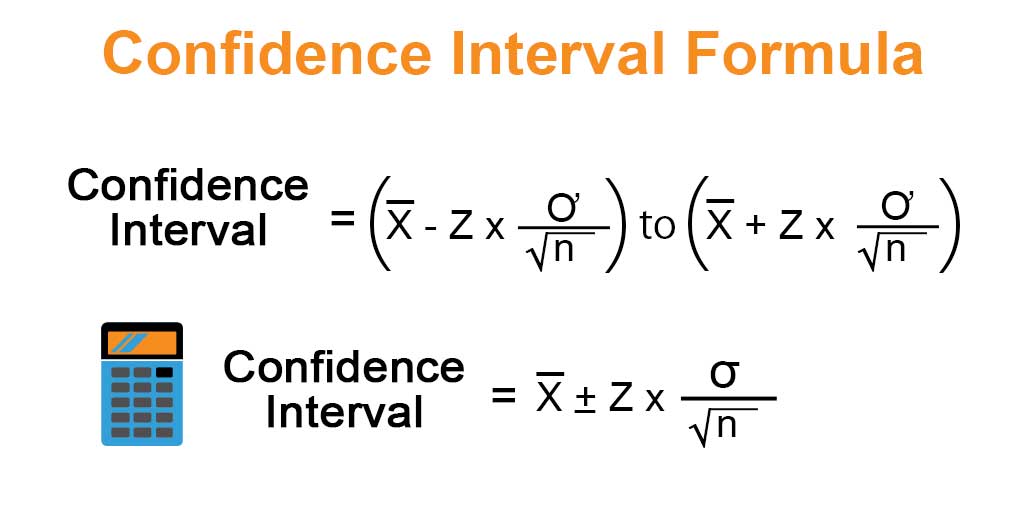

We just need to remember that the SEs come from the diagonal of:Īgain, I have control over and all I need to remember is that for (centered) predictors, is really just the inverse of the variance-covariance matrix of the predictors. But what about multiple OLS regression? Well, I think the multiple regression case is even simpler. The code line e sqrt(var.e/ssq) #population SE There are two elements here that we need to have control over: and. Of the various ways to calculate the SE for the regression coefficient in simple linear regression, I prefer this one for our purposes: Let’s start from the beginning, simple linear regression. Therefore, I reasoned, it makes sense to set these in simulation studies whenever possible so we have a version of what the ‘true’ value would be. Recall that the regression line is the line that minimizes the sum of squared deviations of prediction (also called the sum of squares error). Well, the fact of the matter is that the way standard errors are defined in OLS multiple linear regression do involve population-level quantities. So the question comes to mind: We are well-acquainted with how to simulate data where the parameters are known in the population…but what about their variabilities? Does it even make sense to talk about “population standard errors”? Like… you need to simulate what the population may look like and then you need to simulate what the sampling behaviour may be… too much simulating, LoL. How large is large Your regression software compares the t statistic on your variable with values in the Students t distribution to determine the. If a coefficient is large compared to its standard error, then it is probably different from 0. Note that we can also use the T Score to P Value. The p-value that corresponds to t 1.089 with df n-2 40 2 38 is 0.283. But this approach has always made me feel kinda awkward because it’s like you’re “double-dipping” in your simulation. 
It can be thought of as a measure of the precision with which the regression coefficient is measured. H0: 1 0 (the slope for hours studied is equal to zero) HA: 1 0 (the slope for hours studied is not equal to zero) We then calculate the test statistic as follows: t b / SEb. So it stands to reason that the average SE should be close to this quantity, even if it was derived empirically through simulation and not analytically. begingroup How would the regression output change if you were, say, to add 106 to each pop value and add -0.0116584times 106 to each fuel value Intuitively, that shifts the data far from pop1029 without altering the regression line and therefore should result in a much wider prediction interval. In layperson’s terms, the standard deviation of the sampling distribution of the coefficients is the standard error. (3) Compare the mean SE to the SD of the coefficients. (2) Calculate the mean of the SEs and the standard deviation of the coefficients. (1) Run your simulation and save both regression coefficients and their standard errors. So…the only way I knew at first about how to assess SEs was in three steps: For the remaining of this article every time I talk about “standard errors” I’m most likely referring to the standard errors of regression coefficients within OLS multiple regression. If they shrink or increase under violations of assumptions then we know something is going to be wrong with our p-values and Type I error rates and yadda-yadda. And a simple way to assess this efficiency is by looking at the estimated standard errors (SEs) of our parameter estimates. When working on ‘robustness-type’ simulations, we are usually not only interested in the unbiasedness and consistency but also the efficiency of our estimators. 
The resulting confidence interval becomes -0.25 ± 0.09 = (-0.34 -0.16), which is a tighter range of values.This is an interesting insight that I had a couple of weeks ago when I was preparing to teach my class in Monte Carlo simulations. In the sample of 50, the standard deviation was found to be 1.0. Assume that the resulting estimate is -0.20, indicating that for every 1.0 point in the P/E ratio, stocks return 0.2% poorer relative performance. Say that an analyst has looked at a random sample of 50 companies in the S&P 500 to understand the association between a stock's P/E ratio and subsequent 12-month performance in the market.
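To make the simple regression case concrete, here is a minimal sketch of the three-step check (mean of the estimated SEs versus the SD of the coefficients) set against the population SE, `sqrt(var_e / ssq)`. It is written in Python with only the standard library rather than in the language of the post's own code line, and everything beyond `var_e` and `ssq` is my assumption: the function name `simulate_slopes`, the parameter values, normal errors, and the choice to draw `x` once and hold it fixed across replications.

```python
import math
import random
import statistics


def simulate_slopes(x, beta0, beta1, var_e, reps, rng):
    """Fixed-x simple regression: return slope estimates, their estimated SEs, and ssq."""
    n = len(x)
    x_bar = sum(x) / n
    ssq = sum((xi - x_bar) ** 2 for xi in x)  # sum of squares of the predictor
    sd_e = math.sqrt(var_e)
    slopes, ses = [], []
    for _ in range(reps):
        # New errors each replication; the predictor values stay the same.
        y = [beta0 + beta1 * xi + rng.gauss(0.0, sd_e) for xi in x]
        y_bar = sum(y) / n
        b1 = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y)) / ssq
        b0 = y_bar - b1 * x_bar
        rss = sum((yi - b0 - b1 * xi) ** 2 for xi, yi in zip(x, y))
        slopes.append(b1)
        ses.append(math.sqrt(rss / (n - 2) / ssq))  # usual estimated SE of the slope
    return slopes, ses, ssq


rng = random.Random(2024)          # hypothetical seed, for reproducibility only
n, var_e = 50, 4.0                 # hypothetical sample size and error variance
x = [rng.gauss(0.0, 1.0) for _ in range(n)]
slopes, ses, ssq = simulate_slopes(x, beta0=1.0, beta1=0.5, var_e=var_e, reps=2000, rng=rng)

population_se = math.sqrt(var_e / ssq)   # set in advance, no replications needed
mean_se = statistics.mean(ses)           # step 2: mean of the estimated SEs
sd_slopes = statistics.stdev(slopes)     # step 2: SD of the coefficients

print(f"population SE: {population_se:.4f}")
print(f"mean est. SE:  {mean_se:.4f}")
print(f"SD of slopes:  {sd_slopes:.4f}")
```

Under these assumptions the three numbers should land close to one another, and the first one is available before a single replication is run.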
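For the multiple regression case, the sketch below checks numerically that, for centered predictors, the SEs taken from the diagonal of the error variance times the inverse of X'X match the ones built from the inverse covariance matrix of the predictors divided by n - 1. It uses two predictors so that a hand-written 2x2 inverse is enough. The helper names (`centered`, `inv2`), the correlation `rho`, and the other values are hypothetical. The last quantity, `se_population`, plugs the population covariance matrix in place of the sample one and is only an approximation, since it ignores sampling variability in the predictors.

```python
import math
import random


def centered(col):
    """Subtract the mean so the column sums to zero."""
    m = sum(col) / len(col)
    return [v - m for v in col]


def inv2(a, b, c, d):
    """Inverse of the 2x2 matrix [[a, b], [c, d]]."""
    det = a * d - b * c
    return [[d / det, -b / det], [-c / det, a / det]]


rng = random.Random(7)              # hypothetical seed
n, var_e, rho = 200, 2.0, 0.5       # hypothetical sample size, error variance, correlation

# Two unit-variance predictors with population correlation rho, then centered.
z1 = [rng.gauss(0.0, 1.0) for _ in range(n)]
z2 = [rng.gauss(0.0, 1.0) for _ in range(n)]
x1 = centered(z1)
x2 = centered([rho * a + math.sqrt(1 - rho ** 2) * b for a, b in zip(z1, z2)])

# Entries of X'X for the centered predictors.
s11 = sum(a * a for a in x1)
s12 = sum(a * b for a, b in zip(x1, x2))
s22 = sum(b * b for b in x2)

# SEs from the diagonal of var_e * (X'X)^{-1}.
xtx_inv = inv2(s11, s12, s12, s22)
se_from_xtx = [math.sqrt(var_e * xtx_inv[j][j]) for j in range(2)]

# Same SEs via the sample covariance matrix S = X'X / (n - 1).
k = n - 1
cov_inv = inv2(s11 / k, s12 / k, s12 / k, s22 / k)
se_from_cov = [math.sqrt(var_e * cov_inv[j][j] / k) for j in range(2)]

# Approximate population version: both diagonal entries of the inverse
# population covariance matrix equal 1 / (1 - rho^2) here.
se_population = math.sqrt(var_e / (k * (1 - rho ** 2)))

print(se_from_xtx, se_from_cov, se_population)
```

The first two lists agree up to floating-point error because centering makes the two routes algebraically identical; the population value differs from them only by how far the sample covariance matrix happens to be from the one set in the population.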
